I have been building an internal nested demo environment that will be used to play out the different NSX-V to NSX-T migration scenarios and to demonstrate the NSX-T Migration Coordinator capabilities in front of customers. I saw that as a good opportunity to cover the full build in my blog. That is not possible in a single article, because of the number of different deployments involved, so I am going to split it into several shorter articles.

Please note that I will refrain from providing instructions on how to install and configure well-known components such as ESXi, vCenter or NSX, as they don't quite align with the current topic. Instead, I will focus on the high-level design and deployment of the environment, and then go into a bit more detail on the actual V2T migration process.

I will cover the pfSense and TrueNAS configuration, even though plenty of how-to articles are already available, because I want to show the specific build that is relevant to my V2T.DEMO lab.

In this first post of the series, I am going to cover the overall design.

Building up nested V2T demo environment - PART1: Overview (this article)

Building up nested V2T demo environment - PART2: pfSense

Building up nested V2T demo environment - PART3: TrueNAS

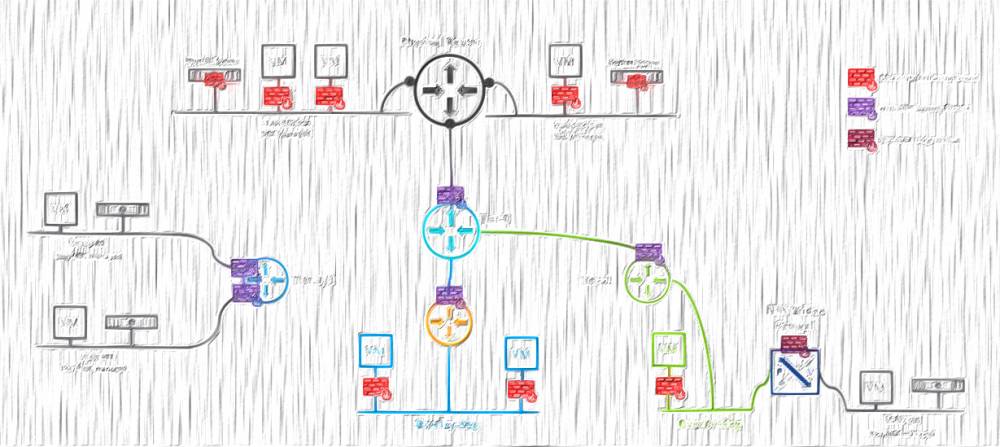

V2T.DEMO High Level Design

I named the environment V2T.DEMO, and I will use that name to refer to it from now on.

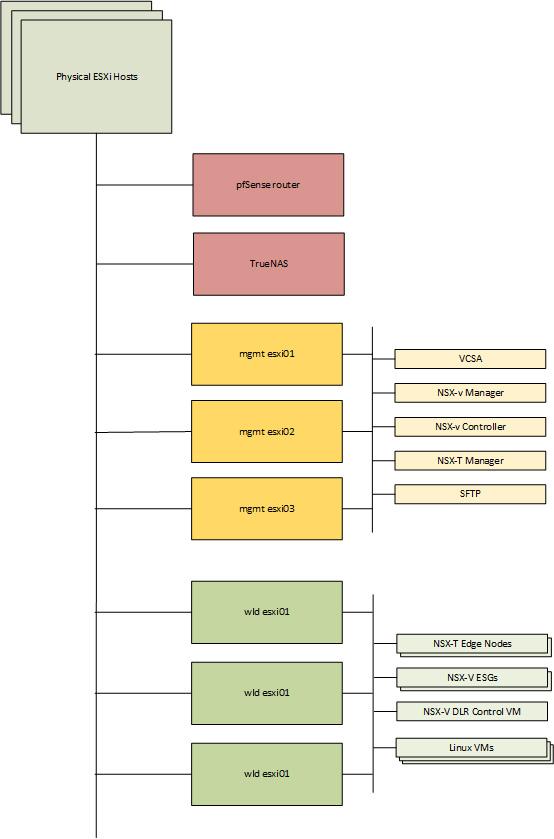

For the purposes of V2T.DEMO I am going to deploy six nested ESXi hosts and divide them into two vSphere clusters: a management cluster and a workload/edge cluster. I will also install a pfSense virtual router to provide the required VLANs and dynamic routing capabilities. Storage comes in the form of iSCSI block storage provided by a TrueNAS appliance. That makes a total of 8 VMs to be installed on our main, physical vSphere environment.

I am utilizing pre-built internal infrastructure resources, such as DNS, NTP, AD and CA servers, hence these deployments won't be covered here.

One level below, in the management cluster, I will install the vCenter, the NSX-V Manager, a single NSX-V Controller appliance, and of course a single NSX-T Manager appliance. In the workload/edge cluster I am planning to deploy an HA-enabled NSX-V ESG.

There will also be an HA-enabled NSX-V DLR Control VM and a Linux-based SFTP server, to mimic a production environment. Some workloads will be needed as well, so I'll deploy a few Linux VMs: a couple of web servers, a DB server, and an app server.

The building blocks of the V2T.DEMO:

Bill of Materials (BOM)

| Component | Version |
| --- | --- |
| pfSense | 2.5.0 |
| TrueNAS | 12.0-U2.1 |
| vCenter | 7.0 U2 |
| ESXi | 7.0 U2 |
| NSX-V | 6.4.10 |
| NSX-T | 3.1.1 |
| Linux | 5.11.2 |

Network

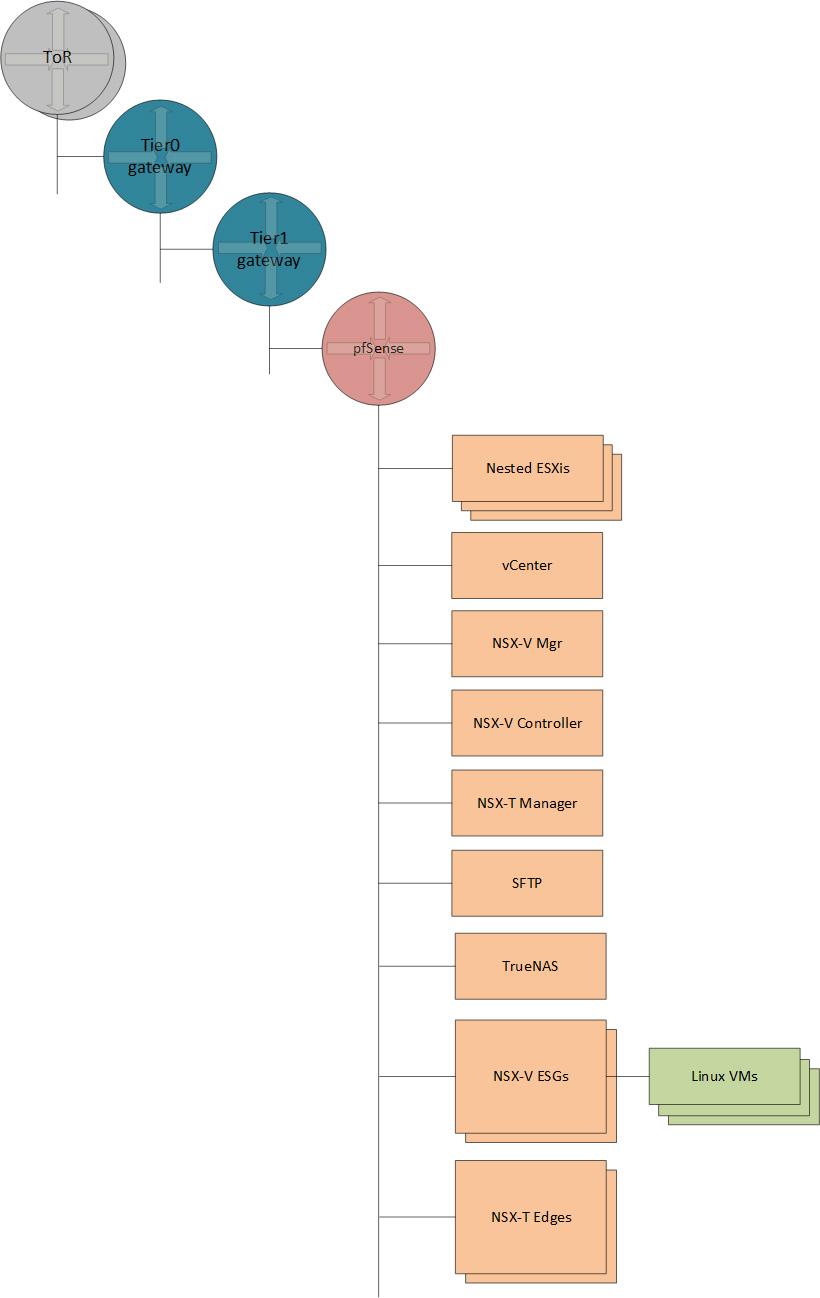

On the networking front, I will connect the pfSense to an overlay segment in our current NSX-T environment, and every nested component will be connected to the pfSense itself. The diagram in this section shows the final logical network.

I am going to use a single IP from the underlying NSX-T environment to configure my virtual router's WAN interface, and a pre-reserved /20 subnet for the purposes of V2T.DEMO. I do not need such a big chunk of address space, but given that our company's environment is mostly used for demos, I am not going to bother with carving out smaller subnets and will just use /24s across the board.

All the VLANs in the table below will be configured on the pfSense:

| Name/Purpose | VLAN | Subnet | Gateway |
| --- | --- | --- | --- |
| Management | 501 | 10.3.1.0/24 | 10.3.1.254 |
| vMotion | 502 | 10.3.2.0/24 | 10.3.2.254 |
| vSAN | 503 | 10.3.3.0/24 | 10.3.3.254 |
| ESXi TEP | 504 | 10.3.4.0/24 | 10.3.4.254 |
| Edge TEP | 505 | 10.3.5.0/24 | 10.3.5.254 |
| Edge Uplink1 | 506 | 10.3.6.0/24 | 10.3.6.254 |
| Edge Uplink2 | 507 | 10.3.7.0/24 | 10.3.7.254 |

* Please note that, even though the pfSense will be the L2 termination point for these VLANs, they still need to be trunked on the physical switch ports facing the physical ESXi servers. Otherwise, the physical switches would drop that traffic when it crosses the boundary of a physical ESXi host.

Another option is to pin all the nested ESXi hosts and the pfSense to a single physical hypervisor, and thus skip the need to trunk the VLANs on the physical switches.
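The VLAN plan above can be sanity-checked before it is typed into the pfSense. The sketch below is only an illustration: it assumes the pre-reserved /20 is 10.3.0.0/20 (the article shows only the /24s), and the `validate` helper is a hypothetical name of mine, not part of any pfSense or NSX tooling.

```python
import ipaddress

# Assumption: the pre-reserved /20 is 10.3.0.0/20; only the /24s are listed in the table.
SUPERNET = ipaddress.ip_network("10.3.0.0/20")

# (name, VLAN ID, subnet, gateway) copied from the VLAN table.
VLANS = [
    ("Management", 501, "10.3.1.0/24", "10.3.1.254"),
    ("vMotion", 502, "10.3.2.0/24", "10.3.2.254"),
    ("vSAN", 503, "10.3.3.0/24", "10.3.3.254"),
    ("ESXi TEP", 504, "10.3.4.0/24", "10.3.4.254"),
    ("Edge TEP", 505, "10.3.5.0/24", "10.3.5.254"),
    ("Edge Uplink1", 506, "10.3.6.0/24", "10.3.6.254"),
    ("Edge Uplink2", 507, "10.3.7.0/24", "10.3.7.254"),
]


def validate(vlans, supernet):
    """Return a list of problems; an empty list means the plan is consistent."""
    problems = []
    seen = []
    for name, vlan_id, subnet, gateway in vlans:
        net = ipaddress.ip_network(subnet)
        gw = ipaddress.ip_address(gateway)
        # Every subnet should be carved out of the reserved block.
        if not net.subnet_of(supernet):
            problems.append(f"{name}: {net} is outside {supernet}")
        # The gateway must be a usable host address inside its own subnet.
        if gw not in net or gw in (net.network_address, net.broadcast_address):
            problems.append(f"{name}: gateway {gw} is not a host in {net}")
        # Subnets must not overlap each other.
        for other_name, other_net in seen:
            if net.overlaps(other_net):
                problems.append(f"{name}: {net} overlaps {other_name}")
        seen.append((name, net))
    vlan_ids = [row[1] for row in vlans]
    if len(vlan_ids) != len(set(vlan_ids)):
        problems.append("duplicate VLAN IDs in the plan")
    return problems


if __name__ == "__main__":
    issues = validate(VLANS, SUPERNET)
    print("VLAN plan is consistent" if not issues else "\n".join(issues))
```

If your reserved block is different, change `SUPERNET` accordingly; the remaining checks do not depend on it.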

Hardware Resources

On the resource side, what I have available is pretty much the same as in the network space: plenty of free resources. I am therefore not going to under-provision V2T.DEMO and risk limiting its performance. On the other hand, I also do not want to spend too much, so I will try to save some capacity here and there. For example, I will be deploying a single NSX-V Controller (a strictly unsupported configuration) and a single NSX-T Manager (which is actually supported nowadays in the so-called Singleton mode).

I have two separate calculations:

Resources needed on the physical environment.

| Component | Memory (GB) | vCPU (count) | Allocated disk space (GB) |
| --- | --- | --- | --- |
| pfSense appliance | 4 | 2 | 8 |
| Linux SFTP | 1 | 2 | 50 |
| Management esxi 01 | 36 | 12 | 566 |
| Management esxi 02 | 36 | 12 | 566 |
| Management esxi 03 | 36 | 12 | 566 |
| Workload esxi 01 | 24 | 12 | 566 |
| Workload esxi 02 | 24 | 12 | 566 |
| Workload esxi 03 | 24 | 12 | 566 |
| TOTAL | 185 GB | 76 vCPUs | 3454 GB |

Resources needed on the nested environment

| Component | Memory (GB) | vCPU (count) | Allocated disk space (GB) |
| --- | --- | --- | --- |
| VCSA 7 - Small | 12 | 2 | 463 |
| NSX-V Manager | 16 | 4 | 60 |
| NSX-V Controller | 4 | 4 | 28 |
| NSX-T Manager - Small | 16 | 4 | 300 |
| NSX-V ESG 01 - Quad Large (HA enabled) | 4 | 8 | 4 |
| DLR Control VM (HA enabled) | 1 | 2 | 2 |
| NSX-T Edge 01 - Medium | 8 | 4 | 200 |
| NSX-T Edge 02 - Medium | 8 | 4 | 200 |
| Web 01 | 1 | 1 | 20 |
| Web 02 | 1 | 1 | 20 |
| App 01 | 1 | 1 | 20 |
| DB 01 | 1 | 1 | 20 |
| SFTP | 1 | 1 | 20 |
| TOTAL | 74 GB | 37 vCPUs | 1357 GB |
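If you resize any of the nested appliances, the totals are easy to recompute. The snippet below is a small sketch that sums the rows of the nested table; the `NESTED` list and the `totals` function are illustrative names, not taken from any VMware tool.

```python
# (component, memory GB, vCPUs, disk GB) copied from the nested-environment table.
NESTED = [
    ("VCSA 7 - Small", 12, 2, 463),
    ("NSX-V Manager", 16, 4, 60),
    ("NSX-V Controller", 4, 4, 28),
    ("NSX-T Manager - Small", 16, 4, 300),
    ("NSX-V ESG 01 - Quad Large (HA enabled)", 4, 8, 4),
    ("DLR Control VM (HA enabled)", 1, 2, 2),
    ("NSX-T Edge 01 - Medium", 8, 4, 200),
    ("NSX-T Edge 02 - Medium", 8, 4, 200),
    ("Web 01", 1, 1, 20),
    ("Web 02", 1, 1, 20),
    ("App 01", 1, 1, 20),
    ("DB 01", 1, 1, 20),
    ("SFTP", 1, 1, 20),
]


def totals(rows):
    """Sum memory, vCPU and disk across all rows and return them as a tuple."""
    memory_gb = sum(row[1] for row in rows)
    vcpus = sum(row[2] for row in rows)
    disk_gb = sum(row[3] for row in rows)
    return memory_gb, vcpus, disk_gb


if __name__ == "__main__":
    memory_gb, vcpus, disk_gb = totals(NESTED)
    print(f"TOTAL: {memory_gb} GB memory, {vcpus} vCPUs, {disk_gb} GB disk")
```

With the values from the table this yields 74 GB of memory, 37 vCPUs and 1357 GB of disk, matching the TOTAL row.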

I realize the resources I have planned are generous, and I could probably achieve the same results with less, but after all, why not have more juice and improve the overall performance of V2T.DEMO? This also provides some contingency and allows for future expansion.

NSX for vSphere environment

Initially I was planning to cover the NSX-V environment in a separate article. However, I realized that anyone interested in the V2T migration process most probably already has some knowledge of how to install and configure NSX-V (and NSX-T as well), so I will instead give an overview of what I will deploy.

It is a simple NSX-V deployment on top of a vSphere environment that has a Management cluster and a collapsed Workload/Edge cluster. In that deployment all the management appliances will run on the Management cluster, hence it will not be prepared for NSX. I will prepare the Workload cluster and configure it for VXLAN. It will use the Load Balance - SRCID teaming policy, hence 2 VTEPs per host.

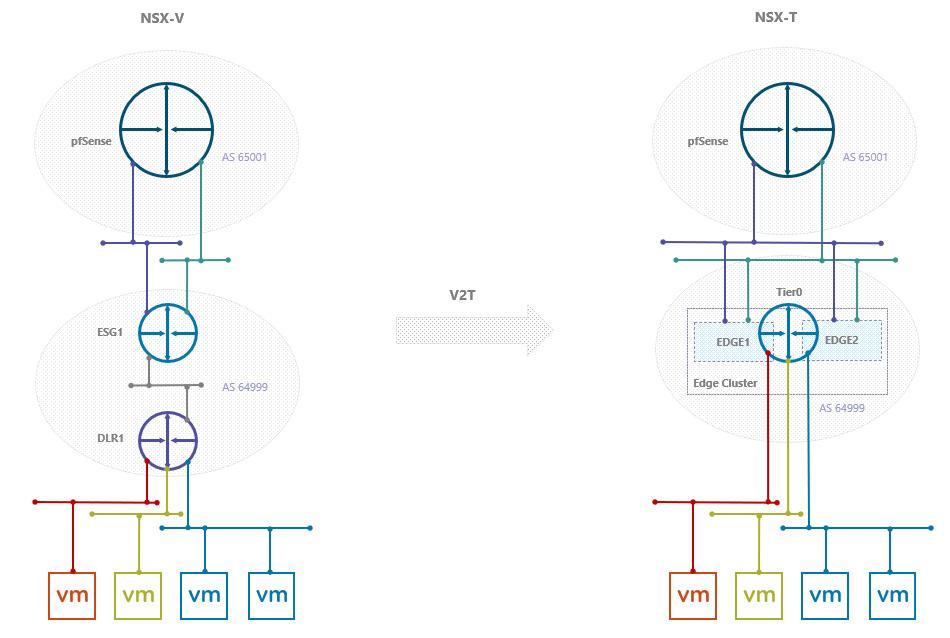

There won't be any surprises on the logical routing side, where I am going to deploy a single HA-enabled ESG that will use BGP to peer with the pfSense and with the DLR Control VM. The reason for choosing BGP is that, even though NSX-T 3.1.1 now supports OSPF, V2T migration of an OSPF-enabled ESG is not supported yet.

Due to the differences in the routing implementation in NSX-V and NSX-T, the V2T migration will result in double the number of Tier0 uplinks compared to the ESG. To accommodate the NSX-T setup, I'll need to create two additional trunked distributed port groups, to which I am going to connect both uplinks of each NSX-T Edge. The Tier0 will automatically get connected to VLAN-tagged segments that will be created by the Migration Coordinator (MC) during the migration process.

As you can see, migrating from a multi-tier topology in NSX-V does not create a multi-tier topology in NSX-T.

Please read the full list of the Topologies Supported by Migration Coordinator and get your source environment aligned.

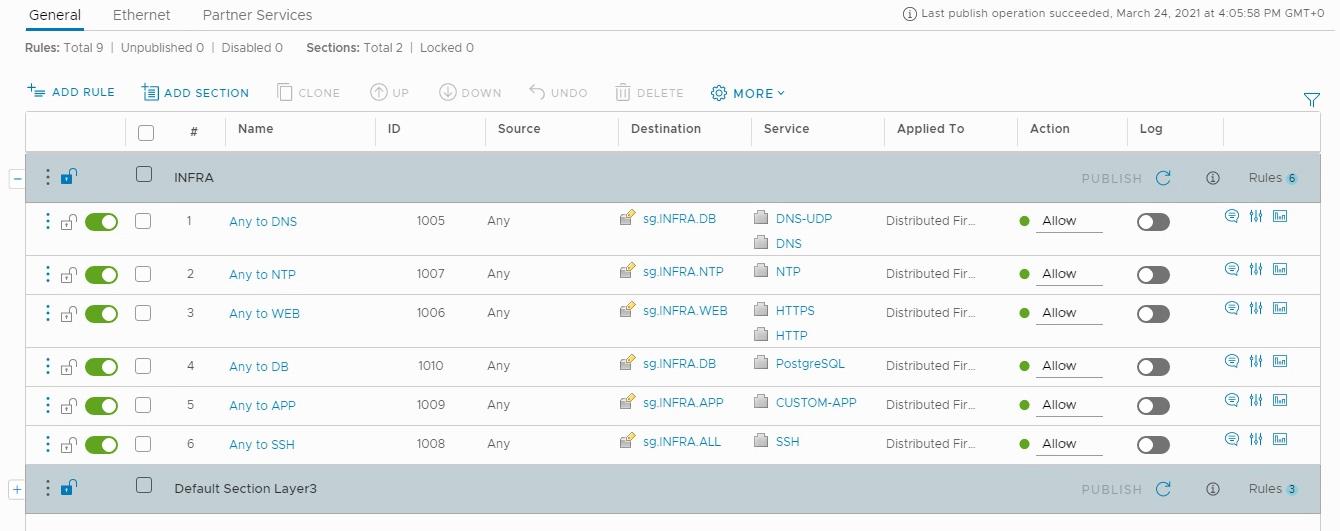

NSX-V Firewall

I believe there is no NSX deployment that does not utilize the distributed firewall, hence creating a few IP sets, Security Groups and Distributed Firewall rules to help me simulate a real-world scenario is a must.

Some of the DFW configurations in my NSX-V environment were intentionally set up in a way that is not supported for V2T migration. You can read the Detailed Feature Support for Migration Coordinator for details on what can be migrated.

NSX-V Migration Prerequisites

VMware has listed multiple prerequisites to be met before the migration to NSX-T. They are mostly around the general health of the source NSX-V environment and a few configuration items that are not supported for migration, hence you should not have them. Examples of such unsupported configurations are using Multicast or Hybrid replication mode, and having a non-default VXLAN port (the default is 4789). Anyway, even if you have an unsupported configuration, you will still be able to run the Migration Coordinator and evaluate the source NSX-V environment for migration eligibility.
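The two configuration examples above can be written down as a tiny preflight check. This is only a sketch of the logic: `NsxvSettings` is a hypothetical snapshot that you would fill in by hand, the replication mode labels are my own, and nothing here queries NSX-V or replaces the evaluation done by the Migration Coordinator itself.

```python
from dataclasses import dataclass

SUPPORTED_VXLAN_PORT = 4789  # the default VXLAN port named in the text


@dataclass
class NsxvSettings:
    """Hypothetical, hand-filled snapshot of the two settings discussed above."""
    replication_mode: str  # "UNICAST", "MULTICAST" or "HYBRID" (labels assumed)
    vxlan_port: int


def migration_blockers(settings):
    """Return the unsupported settings found; an empty list means none of the two apply."""
    blockers = []
    if settings.replication_mode.upper() in {"MULTICAST", "HYBRID"}:
        blockers.append(
            f"replication mode {settings.replication_mode} is not supported for migration"
        )
    if settings.vxlan_port != SUPPORTED_VXLAN_PORT:
        blockers.append(
            f"VXLAN port {settings.vxlan_port} differs from the default {SUPPORTED_VXLAN_PORT}"
        )
    return blockers


if __name__ == "__main__":
    lab = NsxvSettings(replication_mode="UNICAST", vxlan_port=4789)
    for line in migration_blockers(lab) or ["no blockers among the checked settings"]:
        print(line)
```

The list covers only the two examples mentioned here; the full set of prerequisites is in the Migration Coordinator documentation.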

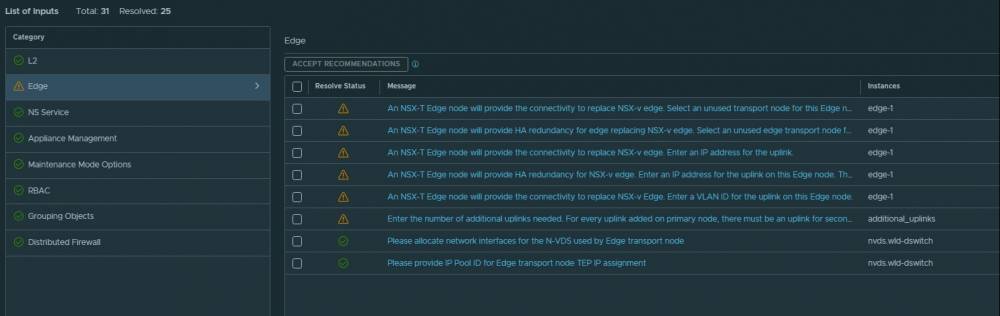

NSX-T Environment

A few bits of NSX-T configuration are needed before the actual migration, to meet the requirements of the Migration Coordinator:

- At least one NSX Manager appliance running in NSX-T Data Center.

- The vCenter server associated with the NSX Data Center for vSphere environment configured as a compute manager on NSX-T Data Center.

- An IP pool to provide IPs for the Edge Tunnel End Points (TEPs). Please note that the NSX-T Edge TEP pool must not overlap with the IP pool configured on the NSX-V ESGs, to avoid duplicate IPs.

- The correct number and size of Edge nodes.

- A and PTR DNS records for the management interface of the Edge Nodes.

- Note that the Edge Nodes must be deployed via OVF, otherwise the Migration Coordinator won't accept them, as it requires empty appliances rather than already configured Transport Nodes.

- Once deployed, the Edge Nodes must be joined to the Management plane by running the following command line:

join management-plane <hostname-or-ip-address[:port]> username <username> thumbprint <thumbprint> [password <password>]

- The NSX Manager thumbprint can be obtained by running the following:

get certificate api thumbprint

- A multi-TEP uplink profile, to be assigned to the Edge Nodes, that contains the correct Edge TEP VLAN (VLAN 505 in my case).
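When several Edge Nodes have to be joined, it can help to render the join command from the list above once and paste it into each Edge console. The helper below is a hedged sketch: `build_join_command` is a name I made up, the manager hostname in the example is hypothetical, and the check that the thumbprint is 64 hexadecimal characters assumes a SHA-256 value, so adjust it if your output looks different.

```python
import re


def build_join_command(manager, username, thumbprint):
    """Render the Edge CLI join command, leaving out the optional password argument."""
    # Assumption: the thumbprint is a SHA-256 value printed as 64 hex characters.
    if not re.fullmatch(r"[0-9a-fA-F]{64}", thumbprint):
        raise ValueError("thumbprint does not look like 64 hex characters")
    return (
        f"join management-plane {manager} "
        f"username {username} thumbprint {thumbprint}"
    )


if __name__ == "__main__":
    # Hypothetical values; use your own NSX Manager address and the thumbprint
    # returned by 'get certificate api thumbprint' on the NSX Manager.
    example_thumbprint = "ab" * 32
    print(build_join_command("nsxt-mgr.v2t.demo", "admin", example_thumbprint))
```

The function only formats text; the command itself still has to be run on each Edge Node.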

Considering the requirements above, I am going to deploy 2 Edge Nodes in the Medium size and hope that will be sufficient. If the number or the size of the deployed Edges is wrong, the Migration Coordinator will produce an error stating the correct number and size of the Edge Nodes needed.

Making V2T.DEMO disposable

Once the environment is fully configured and ready for V2T migration, I will shut down everything and take a backup with Veeam, making the environment reusable as many times as we need to play out the migration process.

That concludes the overview of the V2T.DEMO environment. If you have any questions/comments, feel free to connect via the comments section or on LinkedIn/Twitter.

References

NSX-T Migration Coordinator Guide

Thanks for reading!

3 comments

Hi Peter,

just read your article, while working on a similar lab. At present i'm not able to bring the edge tep tunnels up (both to other edges as well as nsx-v enabled hosts). So far i did try a lot of scenarios. In every single case (edges on same/different DVS7, with/without VLANs) i'm not able to ping the edge/host tep gateway address (two different VLANs) from the edge vrf 0.

do you have another idea?

thanks and best regards

Erich
